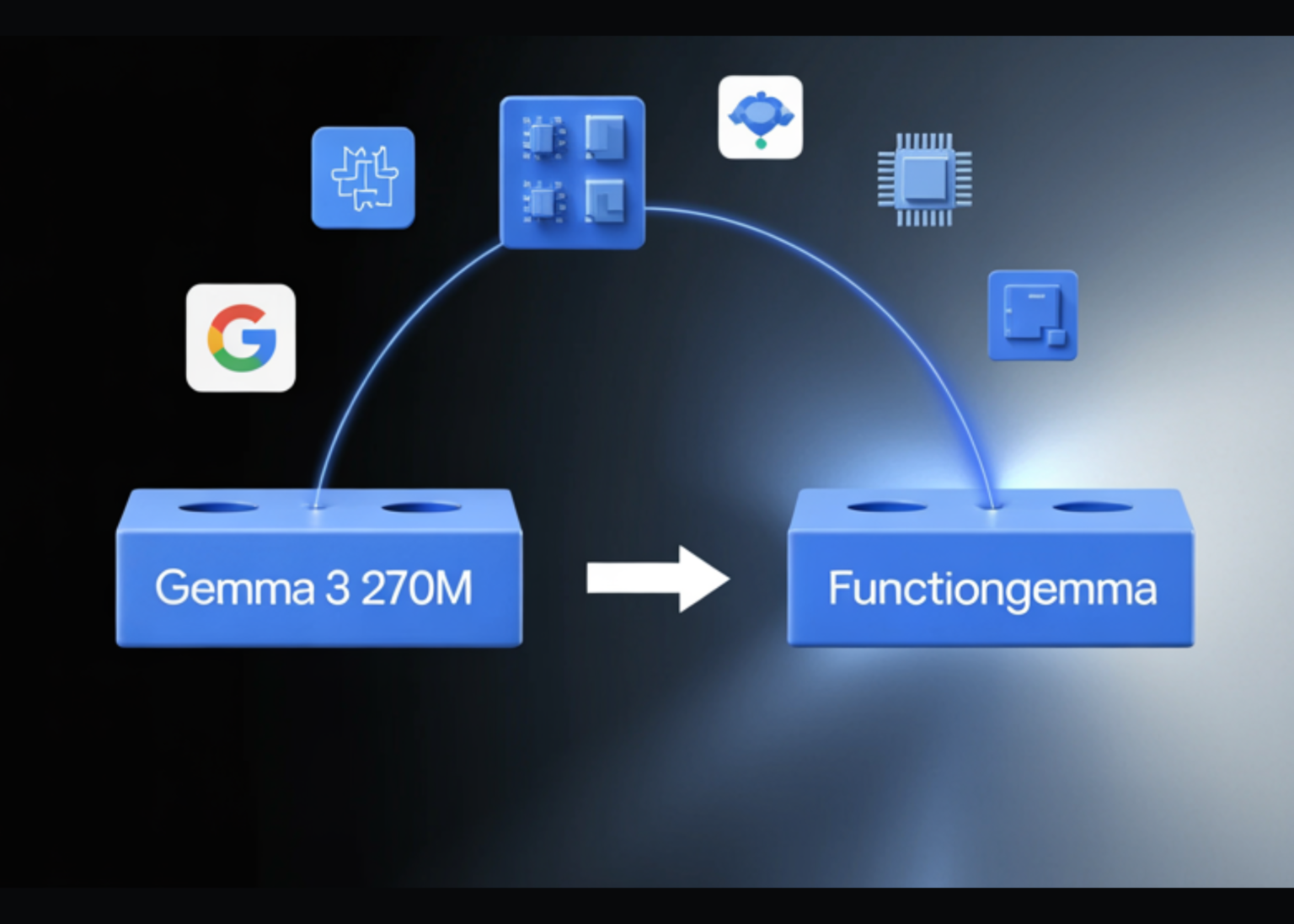

Google has released FunctionGemma, a specialized version of the Gemma 3 270M model that is trained specifically for function calling and designed to run as an edge agent that maps natural language to executable API actions.

What is FunctionGemma?

FunctionGemma is a 270M parameter, text-only transformer based on Gemma 3 270M. It keeps the same architecture as Gemma 3 and is released as an open model under the Gemma license, but its training objective and chat format are dedicated to function calling rather than free-form dialogue.

The model is intended to be fine-tuned for specific function calling tasks and is not positioned as a general chat assistant. The primary design goal is to translate user instructions and tool definitions into structured function calls, then optionally summarize tool responses for the user.

From an interface perspective, FunctionGemma is presented as a standard causal language model. Inputs and outputs are text sequences. The input context is 32K tokens, and the output can be up to 32K tokens per request minus the tokens consumed by the input, since input and output share one budget.
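Because the output shares its budget with the input, the room left for generation shrinks as the prompt grows. The arithmetic can be sketched as follows, assuming "32K" means 32,768 tokens (the exact figure is an assumption) and using a hypothetical helper name:

```python
CONTEXT_WINDOW = 32 * 1024  # assumption: "32K" means 32,768 tokens


def max_output_tokens(prompt_tokens: int, requested: int, window: int = CONTEXT_WINDOW) -> int:
    """Return how many tokens can still be generated for a prompt of the given length."""
    if prompt_tokens < 0 or requested < 0:
        raise ValueError("token counts must be non-negative")
    remaining = max(window - prompt_tokens, 0)  # budget left after the prompt
    return min(requested, remaining)


print(max_output_tokens(prompt_tokens=30_000, requested=4_096))  # 2768
```

A long list of tool definitions counts against the same window, so keeping schemas compact leaves more room for the model's reply.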

Architecture and training data

The model uses the Gemma 3 transformer architecture and the same 270M parameter scale as Gemma 3 270M. The training and runtime stack reuse the research and infrastructure used for Gemini, including JAX and ML Pathways on large TPU clusters.

FunctionGemma uses Gemma’s 256K vocabulary, which is optimized for JSON structures and multilingual text. This improves token efficiency for function schemas and tool responses and reduces sequence length for edge deployments where latency and memory are tight.

The model is trained on 6T tokens, with a knowledge cutoff in August 2024. The dataset focuses on two main categories:

- public tool and API definitions

- tool use interactions that include prompts, function calls, function responses and natural language follow up messages that summarize outputs or request clarification

This training signal teaches both syntax (which function to call and how to format its arguments) and intent (when to call a function and when to ask for more information).
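To make the syntax half concrete, the sketch below pairs a tool definition with a check that a proposed call names a known function and supplies the required arguments. The schema layout follows the common JSON Schema style for tool definitions. The set_flashlight tool, its parameters and the validate_call helper are hypothetical and are not taken from the training data.

```python
from typing import Any

# Hypothetical tool definition in JSON Schema style (illustrative only).
SET_FLASHLIGHT = {
    "name": "set_flashlight",
    "description": "Turn the device flashlight on or off.",
    "parameters": {
        "type": "object",
        "properties": {"enabled": {"type": "boolean"}},
        "required": ["enabled"],
    },
}

# Map a few JSON Schema type names to Python types; unknown names are not checked.
PYTHON_TYPES = {"boolean": bool, "string": str, "integer": int, "number": (int, float)}


def validate_call(call: dict[str, Any], tools: list[dict[str, Any]]) -> list[str]:
    """Return a list of problems with a proposed call; an empty list means it looks valid."""
    by_name = {tool["name"]: tool for tool in tools}
    tool = by_name.get(call.get("name"))
    if tool is None:
        return [f"unknown function: {call.get('name')!r}"]
    schema = tool["parameters"]
    args = call.get("arguments", {})
    problems = []
    for required in schema.get("required", []):
        if required not in args:
            problems.append(f"missing argument: {required}")
    for key, value in args.items():
        spec = schema["properties"].get(key)
        if spec is None:
            problems.append(f"unexpected argument: {key}")
        elif not isinstance(value, PYTHON_TYPES.get(spec["type"], object)):
            problems.append(f"wrong type for {key}: expected {spec['type']}")
    return problems


print(validate_call({"name": "set_flashlight", "arguments": {"enabled": True}}, [SET_FLASHLIGHT]))  # []
print(validate_call({"name": "set_flashlight", "arguments": {}}, [SET_FLASHLIGHT]))  # ['missing argument: enabled']
```

A validator like this only catches malformed calls. Deciding to ask for clarification instead of emitting an incomplete call is the intent half, which the model has to learn from data.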

Conversation format and control tokens

FunctionGemma does not use a free-form chat format. It expects a strict conversation template that separates roles and tool-related regions. Conversation turns are wrapped as <start_of_turn>role … <end_of_turn>, where the role is typically developer, user or model.

Within those turns, FunctionGemma relies on a fixed set of control token pairs:

- <start_function_declaration> and <end_function_declaration> for tool definitions

- <start_function_call> and <end_function_call> for the model’s tool calls

- <start_function_response> and <end_function_response> for serialized tool outputs

These markers let the model distinguish natural language text from function schemas and from execution results. The Hugging Face apply_chat_template API and the official Gemma templates generate this structure automatically for messages and tool lists.
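As a rough illustration of why paired markers help, the sketch below pulls the payloads out of a generated string by scanning for an opening and a closing marker. The marker strings are parameters and the values in the usage lines are placeholders; substitute the call marker pair from the list above, checked against the tokenizer's special tokens. The extract_regions helper is hypothetical, and the payloads are returned as raw text because their internal format is not covered here.

```python
import re


def extract_regions(text: str, open_marker: str, close_marker: str) -> list[str]:
    """Return the raw payload of every open/close marker pair found in text, in order."""
    pattern = re.compile(
        re.escape(open_marker) + r"(.*?)" + re.escape(close_marker),
        re.DOTALL,  # payloads may span several lines
    )
    return [payload.strip() for payload in pattern.findall(text)]


# Placeholder markers; replace with the model's actual call marker pair.
CALL_OPEN, CALL_CLOSE = "<OPEN>", "<CLOSE>"

generated = f"{CALL_OPEN}PAYLOAD_1{CALL_CLOSE}{CALL_OPEN}PAYLOAD_2{CALL_CLOSE}"
print(extract_regions(generated, CALL_OPEN, CALL_CLOSE))  # ['PAYLOAD_1', 'PAYLOAD_2']
```

On the prompt side the chat template builds the structure, so parsing of this kind is only needed for the model's output.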

Fine tuning and Mobile Actions performance

Out of the box, FunctionGemma is already trained for generic tool use. However, the official Mobile Actions guide and the model card emphasize that small models reach production level reliability only after task specific fine tuning.

The Mobile Actions demo uses a dataset where each example exposes a small set of tools for Android system operations, such as creating a contact, setting a calendar event, controlling the flashlight and viewing a map. FunctionGemma learns to map utterances such as ‘Create a calendar event for lunch tomorrow’ or ‘Turn on the flashlight’ to those tools with structured arguments.

On the Mobile Actions evaluation, the base FunctionGemma model reaches 58 percent accuracy on a held out test set. After fine tuning with the public cookbook recipe, accuracy increases to 85 percent.
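The text here does not spell out how accuracy is scored. One plausible reading, sketched below as an assumption, is exact match: a prediction counts only if the function name and every argument equal the reference. The example records and the exact_match_accuracy helper are hypothetical.

```python
def exact_match_accuracy(predictions: list[dict], references: list[dict]) -> float:
    """Fraction of examples whose predicted call equals the reference call exactly."""
    if len(predictions) != len(references):
        raise ValueError("predictions and references must have the same length")
    if not references:
        raise ValueError("cannot score an empty evaluation set")
    correct = sum(pred == ref for pred, ref in zip(predictions, references))
    return correct / len(references)


# Hypothetical evaluation records: the second prediction adds an argument the reference lacks.
references = [
    {"name": "set_flashlight", "arguments": {"enabled": True}},
    {"name": "create_contact", "arguments": {"first_name": "Ada"}},
]
predictions = [
    {"name": "set_flashlight", "arguments": {"enabled": True}},
    {"name": "create_contact", "arguments": {"first_name": "Ada", "last_name": "L"}},
]
print(exact_match_accuracy(predictions, references))  # 0.5
```

Read as error rates, the reported figures fall from 42 percent to 15 percent, so fine tuning removes roughly 64 percent of the base model's mistakes on this test set.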

Edge agents and reference demos

The main deployment target for FunctionGemma is edge agents that run locally on phones, laptops and small accelerators such as NVIDIA Jetson Nano. The small parameter count (270M, roughly 0.3B) and support for quantization allow inference with low memory and low latency on consumer hardware.
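A back-of-the-envelope estimate shows why the parameter count matters for memory. The sketch counts weight storage only, for 270M parameters at a few common precisions. It ignores activations, the KV cache and runtime overhead, and the precisions listed are illustrative, not a statement of which formats the model ships in.

```python
PARAMS = 270_000_000  # parameter count stated for FunctionGemma


def weight_megabytes(params: int, bits_per_weight: int) -> float:
    """Approximate weight storage in megabytes (10**6 bytes) at a given precision."""
    return params * bits_per_weight / 8 / 1_000_000


for label, bits in [("16-bit", 16), ("8-bit", 8), ("4-bit", 4)]:
    print(f"{label}: {weight_megabytes(PARAMS, bits):.0f} MB")
# 16-bit: 540 MB
# 8-bit: 270 MB
# 4-bit: 135 MB
```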

Google ships several reference experiences through the Google AI Edge Gallery:

- Mobile Actions shows a fully offline assistant style agent for device control using FunctionGemma fine tuned on the Mobile Actions dataset and deployed on device.

- Tiny Garden is a voice controlled game where the model decomposes commands such as “Plant sunflowers in the top row and water them” into domain specific functions like plant_seed and water_plots with explicit grid coordinates.

- FunctionGemma Physics Playground runs entirely in the browser using Transformers.js and lets users solve physics puzzles via natural language instructions that the model converts into simulation actions.

These demos show that a 270M parameter function caller can support multi-step logic on device without server calls, given appropriate fine-tuning and tool interfaces.
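The multi-step pattern in these demos amounts to executing a sequence of structured calls against a registry of local functions. A minimal sketch follows, using the plant_seed and water_plots names from Tiny Garden. Their signatures, the grid representation and the call dictionaries are hypothetical, since the demo's actual interfaces are not described here.

```python
from typing import Callable

garden: dict[tuple[int, int], dict] = {}  # (row, col) -> plot state


def plant_seed(row: int, col: int, seed: str) -> str:
    """Hypothetical tool: plant a seed in one grid cell."""
    garden[(row, col)] = {"seed": seed, "watered": False}
    return f"planted {seed} at ({row}, {col})"


def water_plots(plots: list[list[int]]) -> str:
    """Hypothetical tool: water every listed [row, col] cell."""
    for row, col in plots:
        garden.setdefault((row, col), {"seed": None, "watered": False})["watered"] = True
    return f"watered {len(plots)} plots"


TOOLS: dict[str, Callable[..., str]] = {"plant_seed": plant_seed, "water_plots": water_plots}


def run_calls(calls: list[dict]) -> list[str]:
    """Execute each call in order and collect the tool outputs."""
    results = []
    for call in calls:
        handler = TOOLS.get(call["name"])
        if handler is None:
            raise KeyError(f"no such tool: {call['name']}")
        results.append(handler(**call["arguments"]))
    return results


# One possible decomposition of "Plant sunflowers in the top row and water them"
# for an assumed 3-column grid.
calls = [
    {"name": "plant_seed", "arguments": {"row": 0, "col": c, "seed": "sunflower"}}
    for c in range(3)
] + [{"name": "water_plots", "arguments": {"plots": [[0, c] for c in range(3)]}}]

print(run_calls(calls)[-1])  # watered 3 plots
```

Keeping the registry explicit means the model can only trigger functions the application has chosen to expose, which matters when the calls control a device.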

Key Takeaways

- FunctionGemma is a 270M parameter, text only variant of Gemma 3 that is trained specifically for function calling, not for open ended chat, and is released as an open model under the Gemma terms of use.

- The model keeps the Gemma 3 transformer architecture and 256K token vocabulary, supports 32K tokens per request shared between input and output, and is trained on 6T tokens.

- FunctionGemma uses a strict chat template that wraps each turn as <start_of_turn>role … <end_of_turn> and adds dedicated control tokens for function declarations, function calls and function responses. Following this template is required for reliable tool use in production systems.

- On the Mobile Actions benchmark, accuracy improves from 58 percent for the base model to 85 percent after task-specific fine-tuning, which suggests that small function callers need domain data more than prompt engineering.

- The 270M scale and quantization support let FunctionGemma run on phones, laptops and Jetson class devices, and the model is already integrated into ecosystems such as Hugging Face, Vertex AI, LM Studio and edge demos like Mobile Actions, Tiny Garden and the Physics Playground.

Asif Razzaq is the CEO of Marktechpost Media Inc.